The Miracle of Apcera and AWS Lambda

I am not a coder. But each time I come across others' wisdom and knowledge, I hold the power to set it free. As a blogger, I tear off a chunk and give it away to people in need, just like me. I discovered a sort of miracle and I want to share it.

The first question is always Why? People don't buy what you do, they buy why you do it.

Figure: Simon Sinek Golden Circle

Why AWS Compute Services?

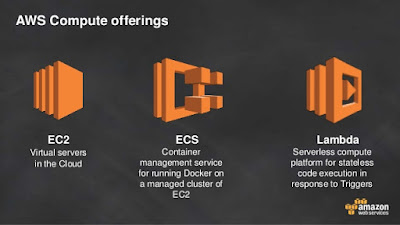

AWS started with EC2; you select a number of virtual servers and run applications.

In a client-server model, the server-side application is a monolith that handles the HTTP requests, executes logic, and retrieves/updates the data in the underlying database.

The problem with a monolithic architecture, though, is that all change cycles usually end up being tied to one another. A modification made to a small section of an application might require building and deploying an entirely new version. If you need to scale specific functions of an application, you may have to scale the entire application instead of just the desired components.

ECS is Amazon's Docker container management service. I have talked about it in Why Docker is a winner versus VMs.

Why AWS Lambda?

AWS Lambda eliminates the old way of orchestrating workflows...

Figure: Slideware AWS

Figure: Slideware AWS

Amazon created a new pricing model, based on the number of requests for your Lambda functions and the time your code executes. The examples on the website show reasonable costs, but S3 storage and DynamoDB costs are not included.

This makes auditing the costs of AWS services even harder than before.
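To make the pricing model concrete, here is a minimal sketch of the arithmetic: a charge per request plus a charge for execution time. It assumes execution time is billed in GB-seconds (duration multiplied by the memory allotted to the function). The names `LambdaRates` and `estimate_monthly_cost` are hypothetical, and the rates are placeholders to be replaced with the figures from the current AWS price list. Storage and database charges are deliberately left out, which is exactly the gap in the published examples.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LambdaRates:
    """Hypothetical unit prices; take real values from the current AWS price list."""
    per_million_requests: float  # USD per 1,000,000 requests
    per_gb_second: float         # USD per GB-second of execution


def estimate_monthly_cost(requests: int, avg_duration_ms: float,
                          memory_mb: int, rates: LambdaRates) -> float:
    """Estimate compute cost only; S3 and DynamoDB charges are NOT included."""
    request_cost = requests / 1_000_000 * rates.per_million_requests
    # Execution time is weighted by the memory allotted to the function.
    gb_seconds = requests * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return request_cost + gb_seconds * rates.per_gb_second


if __name__ == "__main__":
    # Illustrative rates only (an assumption, not a quote of current pricing).
    rates = LambdaRates(per_million_requests=0.20, per_gb_second=0.00001667)
    cost = estimate_monthly_cost(3_000_000, avg_duration_ms=200, memory_mb=512, rates=rates)
    print(f"Estimated compute cost: ${cost:.2f}")
```

Whatever this function returns is only part of the bill, so an audit still has to add the storage and database lines separately.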

Why does AWS Lambda run microservices well?

While there is no standard, formal definition of microservices, there are certain characteristics that help us identify the style. Essentially, microservice architecture is a method of developing software applications as a suite of independently deployable, small, modular services in which each service runs a unique process and communicates through a well-defined, lightweight mechanism to serve a business goal.

The communication is essential. I heard that Lambda is like a modern throwback to Message Oriented Middleware (MOM). It abstracts out any transports and just has code fragments that respond to events/requests. This is very relevant to microservices.
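The idea of "code fragments that respond to events" can be sketched in a few lines. The entry point below uses the `(event, context)` shape that Lambda uses for Python functions. Everything else is an assumption made for illustration: the `type` field on the event, the `order.created` event name, and the small `on` registry are all hypothetical.

```python
from typing import Any, Callable, Dict

Event = Dict[str, Any]

# Registry of code fragments, keyed by a hypothetical "type" field on the event.
HANDLERS: Dict[str, Callable[[Event], Event]] = {}


def on(event_type: str):
    """Register a function as the handler for one event type."""
    def register(func: Callable[[Event], Event]) -> Callable[[Event], Event]:
        HANDLERS[event_type] = func
        return func
    return register


@on("order.created")
def order_created(event: Event) -> Event:
    # The fragment sees only the event, never the transport that delivered it.
    return {"status": "accepted", "order_id": event["order_id"]}


def lambda_handler(event: Event, context: Any = None) -> Event:
    """Entry point in the (event, context) shape used by Lambda for Python."""
    handler = HANDLERS.get(event.get("type", ""))
    if handler is None:
        return {"status": "ignored"}
    return handler(event)
```

Calling `lambda_handler({"type": "order.created", "order_id": 7})` runs only the registered fragment. The code never mentions a queue, a socket, or a server.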

How the services communicate with each other depends on your application’s requirements, but many developers use HTTP/REST with JSON or Protobuf. DevOps professionals are, of course, free to choose any communication protocol they deem suitable, but in most situations, REST (Representational State Transfer) is a useful integration method because of its comparatively lower complexity over other protocols.
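As a rough illustration of HTTP/REST with JSON, the sketch below runs one tiny service and has a second piece of code call it, using only the Python standard library. The `/status` path, the payload, and the names `StatusHandler`, `fetch_status` and `demo` are hypothetical. A real deployment would sit behind a proper web framework or gateway.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, HTTPServer


class StatusHandler(BaseHTTPRequestHandler):
    """A tiny service exposing one JSON resource at the hypothetical path /status."""

    def do_GET(self):
        if self.path != "/status":
            self.send_error(404)
            return
        body = json.dumps({"service": "clock", "ok": True}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the example quiet


def fetch_status(port: int) -> dict:
    """Act as the calling service: GET the resource and decode the JSON reply."""
    conn = HTTPConnection("127.0.0.1", port, timeout=5)
    try:
        conn.request("GET", "/status")
        return json.loads(conn.getresponse().read().decode("utf-8"))
    finally:
        conn.close()


def demo() -> dict:
    """Start the service on a free local port, call it once, then shut it down."""
    server = HTTPServer(("127.0.0.1", 0), StatusHandler)  # port 0 = any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return fetch_status(server.server_address[1])
    finally:
        server.shutdown()
        server.server_close()
```

The caller knows only a URL and a JSON shape. That small contract is what lets each service be deployed on its own schedule.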

My kitchen wall clock is atomic

In a very simple way, I experience a microservice in my kitchen. I have a $25 atomic wall clock, which changes for Daylight Saving Time twice a year. I could have two monolith apps: one for summer and one for winter. Yet my clock receives a trigger from Boulder, Colorado and uses one application only. The latest NIST-F2 standard is accurate to 1 second in 300 million years. This type of time accuracy is probably important to know how long I should boil an egg :-)

On a more serious note, here is a sample of the logic used before implementing a new microservice:

Imagine Company X with two teams: Support and Accounting. Instinctively, we separate out the high risk activities; it’s only difficult deciding responsibilities like customer refunds. Consider how we might answer questions like “Does the Accounting team have enough people to process both customer refunds and credits?” or “Wouldn’t it be a better outcome to have our Support people be able to apply credits and deal with frustrated customers?” The answers get resolved by Company X’s new policy: Support can apply a credit, but Accounting has to process a refund to return money to a customer. The roles and responsibilities in this interconnected system have been successfully split, while gaining customer satisfaction and minimizing risks.

Lambda versus Apcera

Apcera NATS is faster than Lambda-based MOM

Chart source: bravenewgeek.com/dissecting-message-queues

The transport of NATS is interesting, but not important. It does mean that adding a new source event type is trivial, and that with NATS, performance and scale come for free. As a developer, you do not need to worry about the transport.
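Why a new event type is trivial to add becomes clearer with a small publish/subscribe sketch. The `InMemoryBus` class below is a hypothetical in-process stand-in written for this post. It is not the NATS client API and it says nothing about NATS performance. The subject names are also made up.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List


class InMemoryBus:
    """In-process stand-in for a message bus; NOT the NATS client API."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[str], None]]] = defaultdict(list)

    def subscribe(self, subject: str, callback: Callable[[str], None]) -> None:
        """Register a callback for one subject (event type)."""
        self._subscribers[subject].append(callback)

    def publish(self, subject: str, payload: str) -> int:
        """Deliver payload to every subscriber of subject; return the delivery count."""
        callbacks = self._subscribers.get(subject, [])
        for callback in callbacks:
            callback(payload)
        return len(callbacks)


if __name__ == "__main__":
    bus = InMemoryBus()
    # Adding a new event type is just a new subject string plus a subscriber.
    bus.subscribe("clock.dst", lambda message: print("clock received:", message))
    bus.publish("clock.dst", "spring forward")
```

Neither the publisher nor the subscriber changes when a different subject is introduced. That is the sense in which the developer does not have to think about the transport.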

Other significant differences

The Apcera layer abstracts the infrastructure, and not only inside AWS.

- Apcera will be multi-cloud, and can run on-premises as well as on multiple cloud providers. Lambda cannot.

- Apcera can generate source events from anything you want, and process them anywhere you want. Lambda can only generate pieces of code for microservices.

- Apcera can code the policies for all AWS apps if they are linked to its platform (not only Lambda).

Why not use Apcera to manage the Lambda function within AWS?

Good question. Apcera can run any app on any supported cloud with no modification.

As Apcera is the backbone of Ericsson's digital industrialization software stack, it can use Lambda to help AWS expand beyond the traditional boundaries of AWS customers.

Apcera's real value proposition

Apcera creates an OSS (Open Source Software) ecosystem that is truly multi-cloud and makes it easy to add new events to any system (see the definitions at the end).

AWS can use this platform to reach any data center outside Amazon's circle of direct customers. It is the first step toward creating a virtual data center platform for planet Earth and beyond.

It is a miracle

AWS's reputation in cloud, plus Apcera, can spawn a string of miracles that blend seamlessly into the order of things. They break no rules, but change everything. The most awesome of miracles reveal the infinite possibilities within the finite nature of everyday things.

Quoting the blogger Peter Linden from Ericsson:

The most exciting part of both the present and the future of data centers is the construction of distributed data centers. Data centers are being brought closer and closer to users to improve their user experience. ...Popping up in our urban neighborhoods like convenience stores, they will be designed to support the most frequently used digital services in our ’hoods as a start. Imagine a network end-point rolled into a neighborhood in a few freezer-sized cabinets, simple to place with easy access to power, cooling and connectivity.

Common sense is strengthened by joy. Just google to see who said these words two hundred years ago.

Definitions

Here is the definition of an open source software ecosystem from a paper by Walt Scacchi, from the University of California, Irvine.

What is open source?

● Open source software (OSS) denotes specifications, representations, socio-technical processes, and multi-party coordination mechanisms in human readable, computer processable formats.

● Socio-technical control of OSS is elastic, negotiated, and amenable to decentralization.

● OSS development subsidized by participants.

What is a (software) ecosystem?

● An ecology of systems with diverse species juxtaposed in adaptive prey-predator food chain relationships.

● Economic network of processes that transform the flow of resources, enacted by actors in different roles, using tools, to produce products, services, or capabilities.

● Software supply network of component producers, system integrators, and consumers.
